Optimizing Amazon DynamoDB: Cost-Saving Strategies for Cloud Engineers

Introduction to DynamoDB and Cost Management Challenges

Amazon DynamoDB has emerged as a powerful and versatile fully managed NoSQL database service, offering seamless scalability, high availability, and single-digit millisecond latency. However, with its widespread adoption, one major challenge persists: cost management.

While DynamoDB is serverless and scales automatically, costs can escalate rapidly if they are not closely managed. DynamoDB pricing depends on the capacity mode you choose, how much data you store, and how your application reads and writes that data. For cloud engineers and architects, understanding these pricing structures and optimizing configurations accordingly is critical for reducing unnecessary expenses while maintaining performance.

It's essential to strike the right balance between performance, scalability, and cost-efficiency with DynamoDB. Without deliberate optimization, organizations may face excessive costs due to over-provisioning or inefficient database configurations.

The goal is to utilize DynamoDB’s features in ways that maximize cost savings without compromising on application performance or scalability. By carefully tailoring capacity modes, indexing strategies, and resource allocation, you can significantly reduce costs while maintaining the full functionality of your applications.

Understanding DynamoDB Pricing

At the core of DynamoDB's cost structure lies the choice between two distinct pricing models: On-Demand and Provisioned Capacity. Both models offer flexibility but differ significantly in how resources are billed and managed.

- On-Demand capacity charges for each read and write request your application makes (metered in request units based on item size), which is ideal for unpredictable traffic patterns.

- Provisioned Capacity, conversely, is better suited for applications with steady, predictable workloads. It allows users to specify the read and write throughput in advance, and they are billed based on the capacity reserved, whether fully utilized or not.

Several additional factors affect the overall cost of operating a DynamoDB table, including:

- Amount of data stored: Primarily driven by the total volume of data held in the table and its indexes.

- Read/write throughput: Depends on the number and size of requests and their consistency requirements; eventually consistent reads cost half as much as strongly consistent reads.

- Global Secondary Index (GSI) usage: Allows queries on different attribute combinations, but incurs additional storage and read/write costs.

- Data transfer between regions: Transfers across regions or to external services like S3 will incur additional charges.

- Backup and restore: Adds data protection but also incurs storage and processing fees.

- Global tables: Synchronizing tables across multiple regions for disaster recovery or low-latency access can significantly increase costs.

Writes also cost more than reads: a write unit covers an item of up to 1 KB while a read unit covers up to 4 KB, and each write unit is priced higher than a read unit, which makes write-heavy workloads more expensive.
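To make the unit sizes concrete, here is a minimal Python sketch of how much capacity a single request consumes, based on the documented sizing rules: reads are metered in 4 KB steps and writes in 1 KB steps, eventually consistent reads cost half a strongly consistent read, and transactional reads and writes cost double. It assumes the item size in bytes is already known and treats 1 KB as 1,024 bytes; it is an estimator, not a billing calculator.

```python
import math

# Capacity units are sized in fixed steps: 4 KB per read unit, 1 KB per write unit.
# This sketch treats 1 KB as 1,024 bytes.
READ_STEP_BYTES = 4 * 1024
WRITE_STEP_BYTES = 1024


def read_units(item_bytes: int, mode: str = "strong") -> float:
    """Read units consumed by reading one item of the given size."""
    steps = max(1, math.ceil(item_bytes / READ_STEP_BYTES))
    if mode == "strong":
        return float(steps)
    if mode == "eventual":
        return steps / 2  # eventually consistent reads cost half
    if mode == "transactional":
        return float(steps * 2)  # transactional reads cost double
    raise ValueError(f"unknown read mode: {mode!r}")


def write_units(item_bytes: int, transactional: bool = False) -> int:
    """Write units consumed by writing one item of the given size."""
    steps = max(1, math.ceil(item_bytes / WRITE_STEP_BYTES))
    return steps * 2 if transactional else steps  # transactional writes cost double


if __name__ == "__main__":
    size = 6 * 1024  # a 6 KB item
    for mode in ("eventual", "strong", "transactional"):
        print(f"{mode} read: {read_units(size, mode)} units")
    print(f"standard write: {write_units(size)} units")
    print(f"transactional write: {write_units(size, transactional=True)} units")
```

For the 6 KB item in the example, an eventually consistent read consumes 1 unit, a strongly consistent read 2, and a standard write 6.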

When tasked with optimizing costs, understanding these factors is the first step toward minimizing DynamoDB expenses. Selecting the right pricing model based on your application’s usage pattern can have a profound impact on the total cost.

Provisioned Capacity: Optimizing Read/Write Throughput

Provisioned capacity allows you to specify the throughput capacity of your DynamoDB tables in read capacity units (RCUs) and write capacity units (WCUs). While it provides predictability in cost and performance, improper allocation of these units can lead to inefficiencies. Under-provisioning results in throttling, where requests are rejected due to insufficient capacity, impacting user experience. Over-provisioning, on the other hand, wastes resources and incurs unnecessary costs.

To optimize provisioned capacity, cloud engineers must continuously monitor the usage patterns of their DynamoDB tables and adjust capacity as needed. Furthermore, rightsizing throughput based on actual demand is a best practice to avoid both underutilization and over-expenditure. You can leverage historical data and usage patterns to better anticipate capacity needs and make informed decisions regarding the allocation of read and write units.
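Rightsizing from historical data can be sketched as follows. This is an illustrative approach, not an AWS-prescribed formula: it converts CloudWatch `Sum` datapoints for a consumed-capacity metric into per-second rates, takes a high percentile, and adds headroom. The 95th-percentile and 25% headroom defaults, and the sample datapoints, are assumptions to tune for your workload.

```python
import math


def per_second_rates(period_sums: list[float], period_seconds: int) -> list[float]:
    """CloudWatch reports ConsumedRead/WriteCapacityUnits as a Sum per period;
    dividing by the period length gives average units per second."""
    return [total / period_seconds for total in period_sums]


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile, pct in (0, 100]."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def recommend_capacity(period_sums: list[float], period_seconds: int = 300,
                       pct: float = 95.0, headroom: float = 0.25,
                       floor: int = 1) -> int:
    """Provisioned units: a high percentile of observed demand plus headroom.

    pct and headroom are illustrative defaults, not AWS recommendations.
    """
    rates = per_second_rates(period_sums, period_seconds)
    return max(floor, math.ceil(percentile(rates, pct) * (1 + headroom)))


if __name__ == "__main__":
    # Hypothetical 5-minute Sum datapoints for ConsumedWriteCapacityUnits.
    sums = [30_000, 45_000, 60_000, 90_000, 30_000]
    print(recommend_capacity(sums))
```

For the sample datapoints, the 95th percentile is 300 units per second, so the sketch recommends 375 provisioned units. Because each datapoint averages five minutes of traffic, short bursts are smoothed out, which is one reason to keep headroom or pair rightsizing with auto scaling.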

Auto Scaling for Provisioned Capacity

Auto scaling plays an essential role in DynamoDB because it adjusts your table's provisioned read/write capacity in response to actual traffic, helping to maintain performance while controlling costs. It reduces both the risk of paying for unused capacity and the risk of throttling due to under-provisioning, although it reacts to sustained changes rather than instantly, so very sudden spikes can still be throttled.

You still have to configure auto scaling policies: a target utilization (the percentage of provisioned capacity you want to be consumed) and minimum and maximum capacity bounds. The target determines when the table scales out, so it should be set to scale only when necessary and avoid sudden cost spikes, while the bounds give you fine control over how resources are allocated and how much you are willing to spend. Auto scaling also removes the burden of manual intervention, allowing for a more streamlined and automated approach to resource management.

However, auto scaling is not without its challenges. A target utilization set too low triggers capacity increases unnecessarily, while one set too high leaves little headroom for bursts. Misconfigured scaling policies can lead to cost inefficiencies, especially during temporary traffic spikes. By continuously refining scaling policies and targets, you can maximize the benefits of auto scaling while keeping costs under control.
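DynamoDB auto scaling is configured through Application Auto Scaling: you register the table's read or write capacity as a scalable target with minimum and maximum bounds, then attach a target tracking policy. The sketch below builds those request arguments as plain dictionaries (so it runs without AWS credentials) and adds a deliberately simplified model of how a target utilization translates into capacity. The table name `Orders` and the chosen bounds are hypothetical, the 20 to 90 percent check reflects the target range described in the DynamoDB documentation, and the real service scales on CloudWatch alarms over time rather than from a single reading.

```python
import math

# Maps each scalable dimension to its predefined target-tracking metric.
_METRIC_FOR_DIMENSION = {
    "ReadCapacityUnits": "DynamoDBReadCapacityUtilization",
    "WriteCapacityUnits": "DynamoDBWriteCapacityUtilization",
}


def _check_dimension(dimension: str) -> None:
    if dimension not in _METRIC_FOR_DIMENSION:
        raise ValueError(f"unsupported dimension: {dimension!r}")


def scalable_target_params(table: str, dimension: str, min_cap: int, max_cap: int) -> dict:
    """Keyword arguments for application-autoscaling register_scalable_target."""
    _check_dimension(dimension)
    if not 1 <= min_cap <= max_cap:
        raise ValueError("require 1 <= min_cap <= max_cap")
    return {
        "ServiceNamespace": "dynamodb",
        "ResourceId": f"table/{table}",
        "ScalableDimension": f"dynamodb:table:{dimension}",
        "MinCapacity": min_cap,
        "MaxCapacity": max_cap,
    }


def target_tracking_params(table: str, dimension: str, target_pct: int) -> dict:
    """Keyword arguments for application-autoscaling put_scaling_policy."""
    _check_dimension(dimension)
    if not 20 <= target_pct <= 90:
        raise ValueError("target utilization must be between 20 and 90 percent")
    return {
        "PolicyName": f"{table}-{dimension}-target-tracking",
        "ServiceNamespace": "dynamodb",
        "ResourceId": f"table/{table}",
        "ScalableDimension": f"dynamodb:table:{dimension}",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": float(target_pct),
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": _METRIC_FOR_DIMENSION[dimension],
            },
        },
    }


def approx_desired_capacity(consumed_per_sec: float, target_pct: int,
                            min_cap: int, max_cap: int) -> int:
    """Simplified model of target tracking: the capacity at which consumption
    equals the target utilization, clamped to the configured bounds."""
    desired = math.ceil(consumed_per_sec * 100 / target_pct)
    return max(min_cap, min(max_cap, desired))


# Usage with boto3 (requires AWS credentials):
# import boto3
# client = boto3.client("application-autoscaling")
# client.register_scalable_target(**scalable_target_params("Orders", "WriteCapacityUnits", 5, 500))
# client.put_scaling_policy(**target_tracking_params("Orders", "WriteCapacityUnits", 70))
```

In this simplified model, a table consuming 140 write units per second with a 70% target settles at 200 provisioned units; the minimum and maximum bounds then cap how far the bill can drift in either direction.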

On-Demand Capacity Mode: When It’s More Cost-Effective

On-Demand capacity mode, introduced in 2018, is designed to handle variable or unpredictable workloads. Instead of pre-allocating read/write capacity, the On-Demand model charges based on the actual number of reads and writes performed. This mode is ideal for applications where traffic fluctuates significantly and future demand is difficult to predict.

The key advantage of On-Demand pricing is that it automatically scales with your application's workload. You pay only for the reads and writes your application performs, making it a cost-efficient option for workloads with intermittent or sudden spikes in traffic. However, each request costs more than the same work done on well-utilized provisioned capacity, so costs can become substantial as sustained usage grows.

In practice, switching to On-Demand capacity during periods of highly unpredictable traffic can reduce DynamoDB costs. For long-running, stable applications with consistent workloads, however, Provisioned Capacity may still provide better long-term cost benefits.
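The trade-off between the two modes can be estimated with simple arithmetic. The sketch below compares a month of On-Demand usage with Provisioned Capacity sized to the peak, and computes the average utilization at which the two cost the same. The prices in the example are placeholders, not AWS's actual rates; substitute current prices for your Region from the DynamoDB pricing page. The sketch also ignores the free tier, reserved capacity, and storage.

```python
import math

HOURS_PER_MONTH = 730  # common approximation for monthly billing


def on_demand_monthly_cost(avg_units_per_sec: float, price_per_million: float) -> float:
    """On-Demand: pay for the request units actually consumed."""
    units = avg_units_per_sec * 3600 * HOURS_PER_MONTH
    return units / 1_000_000 * price_per_million


def provisioned_monthly_cost(peak_units_per_sec: float, price_per_unit_hour: float,
                             target_pct: int = 70) -> float:
    """Provisioned: pay every hour for capacity sized to the peak, used or not."""
    units = math.ceil(peak_units_per_sec * 100 / target_pct)
    return units * HOURS_PER_MONTH * price_per_unit_hour


def breakeven_utilization(price_per_million: float, price_per_unit_hour: float) -> float:
    """Average utilization of provisioned capacity above which Provisioned is cheaper."""
    on_demand_per_unit_hour = 3600 * price_per_million / 1_000_000
    return price_per_unit_hour / on_demand_per_unit_hour


if __name__ == "__main__":
    # PLACEHOLDER prices for illustration only; look up current prices for your Region.
    od_price, prov_price = 1.00, 0.001
    print(f"On-Demand:   ${on_demand_monthly_cost(10, od_price):.2f}")
    print(f"Provisioned: ${provisioned_monthly_cost(70, prov_price):.2f}")
    print(f"Break-even utilization: {breakeven_utilization(od_price, prov_price):.0%}")
```

With these placeholder prices, a workload averaging 10 units per second but peaking at 70 is cheaper On-Demand ($26.28 versus $73.00 per month), because its provisioned capacity would sit about 90% idle; Provisioned becomes cheaper once average utilization rises above roughly 28%.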

Indexing: Reduce Costs with Efficient Data Access Patterns

Indexes in DynamoDB, specifically Global Secondary Indexes (GSIs) and Local Secondary Indexes (LSIs), can significantly impact cost. Indexes let you query efficiently on attributes other than the table's primary key, but each index consumes additional storage and adds write costs whenever indexed items change, so only create indexes that are truly necessary for your queries.

- Local Secondary Indexes (LSIs) enable you to create an alternative sort key for querying data within a specific partition. LSIs use the same partition key as the base table but have a different sort key, which allows for querying the data using various sort keys without duplicating the entire table.

Think of LSIs as a neighborhood directory. Your main city phone book (base table) organizes contacts by last name within each neighborhood (partition). The LSI allows you to organize the same contacts by phone number, but only within each specific neighborhood.

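- Global Secondary Indexes (GSIs) allow for querying across all partitions in a table. They have a partition key and sort key that can be different from the base table's primary key. Unlike LSIs, which share the base table's capacity, each GSI has its own read/write capacity (provisioned separately in Provisioned mode, billed per request in On-Demand mode) in addition to its storage, and every base-table write that changes the index also consumes write capacity on the GSI.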

Think of GSIs as the city phone book (base table) where contacts are listed by last name. Using a GSI, you can create a separate phone book that organizes the same contacts by their phone numbers. You can look up contacts based on a completely different key, regardless of which neighborhood (partition) they live in.

Optimizing indexes means striking a balance between performance and cost. Common strategies include:

- Minimize the number of indexes, as each index added to a table can considerably increase storage and operational costs.

- Evaluate the actual query patterns before implementing additional indexes to determine whether those queries can be supported using existing indexes or data models.

- Optimize your queries so they can be resolved efficiently with fewer indexes. In many cases, using the partition key and sort key in creative ways can eliminate the need for extra indexes.
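One way to see why each index adds cost is to count the write units a single insert consumes. This sketch relies on two documented behaviors: GSI writes are sized by the index entry (its keys plus projected attributes), and items missing a GSI's key attributes are not written to that index. A 3 KB item is inserted into a table with zero or two GSIs; the sizes are hypothetical, and the model covers inserts only.

```python
import math

WRITE_STEP_BYTES = 1024  # one write unit covers up to 1 KB


def write_units(size_bytes: int) -> int:
    return max(1, math.ceil(size_bytes / WRITE_STEP_BYTES))


def insert_cost(base_item_bytes: int, index_entry_bytes: list[int]) -> int:
    """Write units to insert one new item into a table and its GSIs.

    index_entry_bytes holds, for each GSI the item appears in, the size of the
    index entry (its keys plus projected attributes). An item that lacks a GSI's
    key attributes is not written to that index, so leave it out of the list.
    Updates that change an index key cost more than this: the index needs a
    delete of the old entry plus a put of the new one.
    """
    return write_units(base_item_bytes) + sum(write_units(b) for b in index_entry_bytes)


if __name__ == "__main__":
    item = 3 * 1024  # a 3 KB item
    print("no GSIs:", insert_cost(item, []))
    print("ALL-projection GSI + KEYS_ONLY GSI:", insert_cost(item, [3 * 1024, 200]))
    print("two ALL-projection GSIs:", insert_cost(item, [3 * 1024, 3 * 1024]))
```

Adding one GSI that projects all attributes and one keys-only GSI raises the cost of the 3 KB insert from 3 to 7 write units; two GSIs projecting all attributes raise it to 9. Projecting only the attributes your queries need keeps index entries, and therefore index writes and storage, small.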

Understanding and adhering to these best practices for DynamoDB data modeling can drastically reduce costs while maintaining efficient data access patterns.

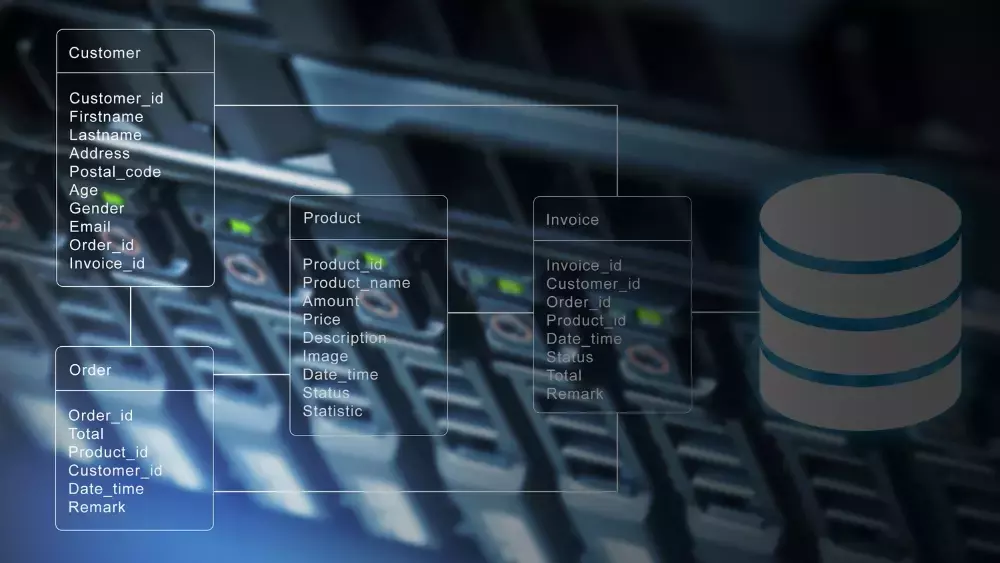

Data Modeling: Partition and Sort Keys

One of the most powerful ways to optimize DynamoDB costs is data modeling, which improves query performance and simplifies data retrieval. DynamoDB's unique architecture relies heavily on partition keys and sort keys to efficiently locate and organize data. Designing your tables to take advantage of these mechanisms is crucial for cost-effective operation.

- Partition keys are essential in distributing data across DynamoDB’s infrastructure. A well-distributed partition key ensures that no single partition becomes a bottleneck, avoiding hot partitions, which can lead to performance issues and increased costs. Aim for even distribution of data across partitions by choosing partition keys that minimize skew and ensure balanced traffic.

- Sort keys, on the other hand, order items within a partition and let you retrieve ranges of them. Partition keys are required, but sort keys are optional. Without a sort key, each partition key value identifies exactly one item, so items can be retrieved directly only by that exact key, and finding items by any non-key attribute requires either an index or a Scan, which reads the entire table and consumes read capacity for all the data it reads. Scans may be appropriate in some use cases, but should not be the default. By designing queries that take advantage of both the partition and sort keys, you can reduce the need for additional indexes, thereby lowering costs. For instance, by combining attributes such as date ranges or specific categories within the sort key, you can retrieve the required data efficiently without the overhead of Global Secondary Indexes (GSIs).
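The sketch below combines both ideas from the list above. A hot logical key (here a hypothetical busy tenant, `TENANT#42`) is spread across several partition key values using a hash-based suffix, a technique the DynamoDB documentation calls write sharding, and a composite sort key of date plus order ID lets a single `Query` fetch a date range without a GSI. The table name, the `pk`/`sk` attribute names, and the shard count are assumptions; the functions only build request arguments for the low-level `Query` API.

```python
import hashlib

SHARDS = 10  # hypothetical shard count; size it to the write rate of the hot key


def sharded_pk(logical_key: str, item_id: str, shards: int = SHARDS) -> str:
    """Write sharding: spread one hot logical key across several partition keys.

    A stable hash of item_id picks the shard, so a given item always lands on
    the same partition key value and can still be read back directly.
    """
    shard = int(hashlib.sha256(item_id.encode("utf-8")).hexdigest(), 16) % shards
    return f"{logical_key}#{shard}"


def order_sort_key(iso_date: str, order_id: str) -> str:
    # ISO-8601 dates sort lexicographically in time order.
    return f"ORDER#{iso_date}#{order_id}"


def date_range_query(table: str, pk_value: str, start: str, end: str) -> dict:
    """Keyword arguments for a low-level DynamoDB Query over a date range."""
    return {
        "TableName": table,
        "KeyConditionExpression": "pk = :pk AND sk BETWEEN :lo AND :hi",
        "ExpressionAttributeValues": {
            ":pk": {"S": pk_value},
            ":lo": {"S": f"ORDER#{start}"},
            # "~" sorts after "#", so every order on the end date is included.
            ":hi": {"S": f"ORDER#{end}~"},
        },
    }


def queries_for_all_shards(table: str, logical_key: str, start: str, end: str,
                           shards: int = SHARDS) -> list[dict]:
    """Reading a sharded key means one Query per shard, merged client-side."""
    return [date_range_query(table, f"{logical_key}#{n}", start, end) for n in range(shards)]
```

The cost of sharding is on the read side: a date-range read for the tenant becomes one `Query` per shard, merged by the client, so keep the shard count no higher than the write rate requires.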

Furthermore, understanding when to denormalize data versus normalizing it is vital.

- Denormalization, where data is duplicated across multiple items or tables, can improve query performance and avoid join-like lookups, but it increases storage costs.

- Normalization reduces storage, but because DynamoDB has no joins, related data must then be assembled with additional requests, making queries more complex.

The choice depends on the specific workload: denormalization may be worth the storage cost in high-read scenarios, while normalization might be preferable for write-heavy operations, since every duplicated copy has to be updated when the data changes.

By thoughtfully applying data modeling techniques and understanding the trade-offs between performance and cost, engineers can create more efficient, scalable, and cost-effective DynamoDB solutions.

Additional AWS documentation: DynamoDB table scans - developer guide

Streams: Balancing Performance and Cost

DynamoDB Streams provide a mechanism to capture item-level changes to a table in near real time, enabling event-driven architectures and triggering downstream processes. While they offer powerful functionality, such as replicating changes to other systems or triggering AWS Lambda functions, they come at an additional cost that must be carefully managed.

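DynamoDB Streams is billed per read request made against the stream, not per record written to it, and `GetRecords` calls made by AWS Lambda triggers are free of stream charges (the Lambda invocations themselves are still billed). Frequent updates to busy tables can therefore still generate significant costs through the consumers that process every change. Cloud engineers need to assess whether the additional functionality provided by Streams justifies the cost in their particular use case.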

To minimize costs, consider:

- Only enable streams on tables where real-time event capture is essential.

- Ensure that downstream consumers, such as Lambda functions, process stream records efficiently. Slow or failing consumers increase costs through longer Lambda run times and repeated retries, and because DynamoDB Streams retains records for only 24 hours, a consumer that falls too far behind can lose data.

- Limit the volume of data your consumers must process. A stream captures every modification to its table, so choose the narrowest stream view type your consumers need (for example, KEYS_ONLY rather than NEW_AND_OLD_IMAGES) and use Lambda event filtering so functions run only for the changes they care about.
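As a sketch of the last two points, the helpers below build the `StreamSpecification` argument for `update_table` with the compact `KEYS_ONLY` view type, and the arguments for Lambda's `create_event_source_mapping` with a filter so the function is invoked only for selected event types (`INSERT`, `MODIFY`, or `REMOVE`). The table name, stream ARN, and function name are placeholders, and whether `KEYS_ONLY` is enough depends on what your consumers need to read.

```python
import json

VIEW_TYPES = {"KEYS_ONLY", "NEW_IMAGE", "OLD_IMAGE", "NEW_AND_OLD_IMAGES"}


def stream_specification(view_type: str = "KEYS_ONLY") -> dict:
    """StreamSpecification for update_table; KEYS_ONLY produces the smallest records."""
    if view_type not in VIEW_TYPES:
        raise ValueError(f"unknown stream view type: {view_type!r}")
    return {"StreamEnabled": True, "StreamViewType": view_type}


def filtered_mapping_params(stream_arn: str, function_name: str,
                            event_names: list[str]) -> dict:
    """Arguments for lambda create_event_source_mapping that invoke the function
    only for the listed stream event names (INSERT, MODIFY, REMOVE)."""
    pattern = {"eventName": sorted(event_names)}
    return {
        "EventSourceArn": stream_arn,
        "FunctionName": function_name,
        "StartingPosition": "LATEST",
        "FilterCriteria": {"Filters": [{"Pattern": json.dumps(pattern)}]},
    }


# Usage with boto3 (requires AWS credentials; names and ARN are placeholders):
# import boto3
# boto3.client("dynamodb").update_table(
#     TableName="Orders", StreamSpecification=stream_specification())
# boto3.client("lambda").create_event_source_mapping(
#     **filtered_mapping_params("arn:aws:dynamodb:...", "process-new-orders", ["INSERT"]))
```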

TTL (Time to Live) for Automatic Data Expiration

Time to Live (TTL) is a built-in DynamoDB feature designed to automatically expire and delete outdated or unnecessary data from your tables. This functionality is excellent for controlling storage costs, as old or stale data can accumulate over time, increasing storage expenses without providing any value.

TTL works by storing an expiration time, as a Unix epoch timestamp in seconds, in a Number attribute that you designate for each item. After that time passes, DynamoDB deletes the item in the background, typically within a few days, without consuming write capacity. You need to evaluate your data lifecycle and apply TTL to items that are no longer needed after a certain period. This is particularly useful for applications that deal with transient data, such as session tokens, logs, temporary records, or caching systems.
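A minimal TTL setup in Python might look like this. It builds the argument for `update_time_to_live`, computes an expiration timestamp in epoch seconds, and writes it into a session item. Because expired items can still appear in reads until the background process deletes them, it also builds a `FilterExpression` that hides them. The attribute name `expires_at`, the `pk` key, and the one-hour lifetime are hypothetical choices.

```python
import time
from typing import Optional

TTL_ATTRIBUTE = "expires_at"  # hypothetical attribute name


def ttl_specification(attribute: str = TTL_ATTRIBUTE) -> dict:
    """TimeToLiveSpecification for update_time_to_live."""
    return {"Enabled": True, "AttributeName": attribute}


def expiry_epoch(ttl_seconds: int, now: Optional[float] = None) -> int:
    """Expiration as a Unix epoch timestamp in seconds, the format TTL expects."""
    base = time.time() if now is None else now
    return int(base) + ttl_seconds


def session_item(session_id: str, user_id: str, ttl_seconds: int = 3600,
                 now: Optional[float] = None) -> dict:
    """A session record (low-level attribute-value format) that expires after ttl_seconds."""
    return {
        "pk": {"S": f"SESSION#{session_id}"},
        "user_id": {"S": user_id},
        TTL_ATTRIBUTE: {"N": str(expiry_epoch(ttl_seconds, now))},
    }


def unexpired_filter(now: Optional[float] = None) -> dict:
    """Query/Scan arguments that hide items which expired but are not yet deleted."""
    base = int(time.time() if now is None else now)
    return {
        "FilterExpression": "attribute_not_exists(#exp) OR #exp > :now",
        "ExpressionAttributeNames": {"#exp": TTL_ATTRIBUTE},
        "ExpressionAttributeValues": {":now": {"N": str(base)}},
    }


# Usage with boto3 (requires AWS credentials; table name is a placeholder):
# import boto3
# ddb = boto3.client("dynamodb")
# ddb.update_time_to_live(TableName="Sessions", TimeToLiveSpecification=ttl_specification())
# ddb.put_item(TableName="Sessions", Item=session_item("abc123", "user-1"))
```

A `FilterExpression` is applied after items are read, so expired items that have not yet been deleted still consume read capacity even though they are not returned.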

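It is important to note that while TTL deletions are free, expired items can remain readable until the background process removes them, so queries that must exclude them should filter on the expiration attribute. TTL deletions also appear in DynamoDB Streams and, in global tables, are replicated to other Regions, where they consume replicated write capacity. Monitoring the impact of TTL on your tables and fine-tuning the expiration settings can help you achieve optimal results without unintended side effects.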

Monitoring and Analyzing Costs with AWS Cost Management Tools

Effective cost management for DynamoDB requires continuous monitoring and analysis. AWS offers several tools that provide insights into your DynamoDB usage and help in optimizing costs. By leveraging these tools, you can more easily track costs, identify inefficiencies, and make data-driven decisions to optimize your DynamoDB tables.

- AWS Cost Explorer is one of the primary tools for visualizing DynamoDB costs over time. It allows you to filter and group expenses by various dimensions, such as service, resource, and usage type. Cost Explorer tracks how changes in traffic, storage, or capacity modes impact costs, helping you identify areas where optimization can be applied.

- AWS Budgets allows organizations to set up alerts based on predefined cost thresholds. This proactive approach ensures you get notified so you can respond quickly to unexpected spikes before costs spiral out of control.

- Amazon CloudWatch provides near real-time monitoring of DynamoDB metrics such as consumed and provisioned read/write capacity units and throttling events. By setting up CloudWatch alarms, you can be notified, or trigger automated actions, when certain thresholds are met, ensuring that cost-inefficient behaviors are promptly addressed.
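For example, the sketch below builds the arguments for CloudWatch's `put_metric_alarm` to alert on any read throttling for a table, and shows how to compare consumed with provisioned capacity. DynamoDB publishes consumed capacity as a `Sum` over each period while provisioned capacity is a per-second figure, so the sum must be divided by the period length first. The table name, SNS topic ARN, five-minute period, and 30% over-provisioning threshold are assumptions.

```python
def throttle_alarm_params(table: str, sns_topic_arn: str,
                          metric: str = "ReadThrottleEvents") -> dict:
    """Arguments for cloudwatch put_metric_alarm: alert on any throttling."""
    return {
        "AlarmName": f"{table}-{metric}",
        "Namespace": "AWS/DynamoDB",
        "MetricName": metric,
        "Dimensions": [{"Name": "TableName", "Value": table}],
        "Statistic": "Sum",
        "Period": 300,
        "EvaluationPeriods": 1,
        "Threshold": 0.0,
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",  # periods with no datapoints count as OK
        "AlarmActions": [sns_topic_arn],
    }


def utilization(consumed_sum: float, period_seconds: int, provisioned_per_sec: float) -> float:
    """Consumed capacity is published as a Sum per period, while provisioned
    capacity is per second; divide by the period before comparing."""
    return consumed_sum / period_seconds / provisioned_per_sec


def looks_overprovisioned(period_sums: list[float], period_seconds: int,
                          provisioned_per_sec: float, threshold: float = 0.3) -> bool:
    """True if even the busiest period used less than `threshold` of capacity."""
    peak = max(utilization(s, period_seconds, provisioned_per_sec) for s in period_sums)
    return peak < threshold


# Usage with boto3 (requires AWS credentials; ARN is a placeholder):
# import boto3
# boto3.client("cloudwatch").put_metric_alarm(
#     **throttle_alarm_params("Orders", "arn:aws:sns:us-east-1:123456789012:alerts"))
```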

Kalos by Stratus10, a comprehensive cost and security management platform for AWS, provides visualizations of your total AWS spend, broken down by database, compute, storage, network, and much more. Additionally, Kalos includes compliance checks for 15 frameworks - all from ready-made dashboards via a single source.

Monitoring tools are essential not only for tracking costs but also for ensuring the overall health of your DynamoDB tables. By using these tools effectively, you can maintain a cost-efficient database environment while delivering high performance and availability.

Additional Quick Tips to Reduce DynamoDB Costs

- Minimize item size: DynamoDB bills reads, writes, and storage based on item size, which includes the lengths of attribute names as well as their values. To reduce costs, drop attributes you don't need and keep attribute names short.

- Batch write operations: BatchWriteItem lets you group up to 25 put or delete requests into a single API call, which reduces network round trips and client-side overhead (for example, shorter Lambda run times) for applications with high write rates. Note that each item still consumes the same write capacity as an individual write, so batching does not reduce the write units consumed.

- Use cheaper storage: For tables with historical data that isn't frequently accessed, consider moving older data to cheaper storage solutions such as Amazon S3. By archiving infrequently accessed items, you can reduce DynamoDB storage costs significantly while keeping the data available in S3, although it is no longer queryable through DynamoDB.
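The sketch below illustrates the first two tips. It approximates item size for string-only items (attribute name plus value, in UTF-8 bytes; other types are sized differently), groups puts into `BatchWriteItem` requests of at most 25, and shows that batching cuts the number of API calls while the write units stay the same. The table name `Events` and the item shape are hypothetical.

```python
import math


def approx_item_bytes(item: dict[str, str]) -> int:
    """Approximate size of an item whose attributes are all strings: DynamoDB
    counts the UTF-8 length of every attribute name plus its value."""
    return sum(len(k.encode("utf-8")) + len(v.encode("utf-8")) for k, v in item.items())


def write_units(item: dict[str, str]) -> int:
    """Write units for one item: 1 KB steps, minimum of one."""
    return max(1, math.ceil(approx_item_bytes(item) / 1024))


def chunks(items: list, size: int = 25):
    """BatchWriteItem accepts at most 25 put/delete requests per call."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def batch_write_request(table: str, chunk: list[dict[str, str]]) -> dict:
    """RequestItems for one BatchWriteItem call (low-level attribute-value format).
    Any UnprocessedItems in the response must be retried by the caller."""
    return {table: [{"PutRequest": {"Item": {k: {"S": v} for k, v in it.items()}}}
                    for it in chunk]}


if __name__ == "__main__":
    items = [{"pk": f"user#{i}", "payload": "x" * 2000} for i in range(60)]
    calls = [batch_write_request("Events", c) for c in chunks(items)]
    print("API calls:", len(calls))                              # 3 instead of 60
    print("write units:", sum(write_units(it) for it in items))  # same either way
    # Shorter attribute names shrink every item that carries them:
    print(approx_item_bytes({"customer_shipping_address": "x"})
          - approx_item_bytes({"addr": "x"}))
```

Sixty 2 KB items take 3 API calls instead of 60 but still consume 120 write units. Renaming `customer_shipping_address` to `addr` saves 21 bytes on every item that carries it.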

DynamoDB Cost Optimization Case Studies: Real-World Examples

High-Traffic Application Reduces Costs with Autoscaling and Index Optimization

A leading e-commerce platform experienced significant traffic spikes during seasonal sales events, resulting in high DynamoDB costs due to over-provisioning of capacity. By implementing autoscaling, the platform was able to dynamically adjust its throughput based on real-time demand, ensuring that resources were only allocated when necessary. Additionally, the team optimized its Global Secondary Indexes by analyzing query patterns and reducing the number of indexes. These changes resulted in a significant reduction in DynamoDB costs during peak traffic periods.

Enterprise Saves Costs by Switching from On-Demand to Provisioned Capacity

An enterprise initially used On-Demand capacity to handle varying traffic loads for its customer-facing application. However, as the workload became steady and predictable, On-Demand costs escalated. After analyzing its traffic patterns, the company switched to Provisioned Capacity, carefully configuring read/write throughput based on historical data. The move reduced monthly costs while maintaining consistent performance.

These real-world examples underscore the importance of continuously assessing workload patterns and adopting the appropriate DynamoDB configurations to achieve cost savings.

Conclusion: Best Practices for Long-Term DynamoDB Cost Optimization

Optimizing DynamoDB for cost efficiency requires a deep understanding of its pricing models, capacity modes, and data structures. Cloud engineers must continuously monitor their databases, making adjustments as workloads evolve. Key strategies include selecting the appropriate capacity mode, leveraging auto-scaling, optimizing indexes, and utilizing features such as TTL to reduce storage costs.

Regularly reviewing the effectiveness of these strategies, while using AWS tools to monitor costs and performance, ensures that you maintain a cost-efficient yet powerful database infrastructure. As DynamoDB continues to evolve, new features and cost-saving opportunities will emerge. Staying informed about these developments and adapting to them is crucial for engineers who aim to maintain optimized and cost-effective cloud architectures.

Get Started: Reduce Your DynamoDB Costs Today

Optimizing DynamoDB requires a multipronged approach, and it all starts with clear visibility into your AWS usage. Kalos by Stratus10 delivers a comprehensive view of your AWS costs, so you can monitor your DynamoDB spend - in real-time and historically. Plus, with Kalos AI trained on your infrastructure, you get custom recommendations on how to reduce your DynamoDB costs, all based on your specific setup and usage.

Try Kalos by Stratus10 completely free for 14 days for a quick solution to lowering your DynamoDB costs!


Resources

- AWS Tutorial: DynamoDB Tables, Items and Attributes (YouTube): Explore tables, items, partition keys, and sort keys, and how they're used when querying or scanning your data.

- Guide to AWS Cost Optimization: Stratus10's in-depth whitepaper on essential cost reduction strategies.

- AWS Documentation:

Published 10/6/24
